The Silent Barrier to AI in Healthcare is Data Infrastructure: A BigRio Perspective

Gaurav Mhetre, published 2025-04-03 08:30:44, updated 2025-04-03 08:40:45

Unlocking the Potential of Data Integration in Digital Health

Gaurav Mhetre, published 2025-02-07 04:34:03, updated 2025-03-27 07:58:59

Harnessing Generative AI for Precision, Efficiency, and Personalized Patient Care

Arpita, published 2024-06-21 00:47:27, updated 2024-08-26 05:35:11

Accelerated Talent Acquisition

Gaurav Mhetre, published 2024-06-20 06:37:14, updated 2024-12-18 00:38:25

AI Studio For Healthcare Startups

Gaurav Mhetre, published 2024-06-20 06:37:01, updated 2024-06-24 11:48:29

Product Engineering Services

Gaurav Mhetre, published 2024-06-20 06:36:15, updated 2025-03-20 06:28:37

TRANSFORMING YOUR BUSINESSES WITH THE POWER OF GENERATIVE AI

White Paper: As GAI reshapes the future of IT, BigRio wants to be your trusted partner in the ever-evolving digital landscape...

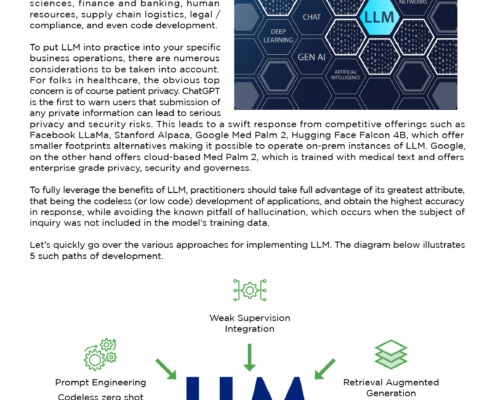

TRUTH OR HALLUCINATIONS IN THE AGE OF LLM

White Paper: To fully leverage the benefits of LLMs, practitioners should take full advantage of their greatest attribute: the codeless development of applications...

CLINICAL TRIALS COHORT SELECTION NLP MODEL FOR A PHARMACEUTICAL COMPANY

White Paper: Our efficient approach to cohort selection, one that has been thoroughly tested against actual client requests...

BigRio is a cutting-edge technology company committed to being your strategic partner in accelerating digital transformation and fostering innovation. With a relentless focus on delivering exceptional solutions, we empower businesses to thrive in the rapidly evolving digital landscape.

GET IN TOUCH

Harvard Square, One Mifflin Place

Suite 400

Cambridge, MA 02138

info@bigr.io